2026-02-26

What Is Analysis Latency?

The hidden delay between your data and your decisions, and how to close it.


Every organisation today has more data than it knows what to do with. And yet the moment something unexpected appears, most teams still wait for days to understand why and act on it.

Decision latency is a compounding chain of three distinct delays, each silently eroding value:

| Stage | What happens | Typical delay |
|---|---|---|
| Data capture latency | The lag between the event occurring and data being collected and stored | Minutes → Days |
| Analysis latency | Time to process, query, model, and interpret the data | Hours → Weeks |
| Action latency | Time between insight being available and a human acting on it | Days → Months |
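To make the chain in the table concrete, here is a minimal Python sketch that splits one decision into its three delays. The `DecisionTimeline` class, its field names, and the example timestamps are all hypothetical, chosen only to illustrate the arithmetic.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class DecisionTimeline:
    """Hypothetical timestamps for one event, from occurrence to action."""
    event_occurred: datetime     # something happens in the business
    data_available: datetime     # the event is stored and queryable
    insight_available: datetime  # someone has an explanation
    action_taken: datetime       # someone acts on it

    def capture_latency(self) -> timedelta:
        return self.data_available - self.event_occurred

    def analysis_latency(self) -> timedelta:
        return self.insight_available - self.data_available

    def action_latency(self) -> timedelta:
        return self.action_taken - self.insight_available

    def decision_latency(self) -> timedelta:
        # The three stages chain together, so the total is their sum.
        return self.action_taken - self.event_occurred


# Illustrative values only: fast capture, slow analysis, slower action.
timeline = DecisionTimeline(
    event_occurred=datetime(2026, 2, 2, 9, 0),
    data_available=datetime(2026, 2, 2, 9, 5),
    insight_available=datetime(2026, 2, 5, 9, 5),
    action_taken=datetime(2026, 2, 12, 9, 5),
)

print("capture: ", timeline.capture_latency())
print("analysis:", timeline.analysis_latency())
print("action:  ", timeline.action_latency())
print("total:   ", timeline.decision_latency())
```

In this made-up example, capture takes five minutes while analysis and action take ten days between them, so speeding up capture alone would barely change the total.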

Modern infrastructure fixed the first problem: streaming pipelines, real-time warehouses, and event-driven architectures have pushed capture latency from days to seconds for many organisations. But the other two stages, analysis latency and action latency, are still human-paced.

Analysis latency is the critical time lag between data becoming available and teams gaining actionable insights.

Historically, analytics required a specific stack of skills: writing SQL, understanding schemas, and interpreting statistical outputs. Everyone without those skills had to wait on analysts. This model introduces unavoidable bottlenecks:

A question like “Which of our top accounts haven't logged in this week?” triggers:

Dashboard check → message to analyst → SQL written across multiple tables → spreadsheet share → follow-up clarifications

What should take 20 minutes takes 2–5 days. Multiply that across teams and weeks.
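For a sense of what the "SQL across multiple tables" step involves, here is a small sketch of that query, run against an in-memory SQLite database. The `accounts` and `logins` tables, their columns, and the sample rows are hypothetical. "Top accounts" is assumed to mean the top N by annual revenue, and "this week" is assumed to mean the seven days before a reference date.

```python
import sqlite3

# Hypothetical schema and sample rows, for illustration only.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE accounts (account_id INTEGER PRIMARY KEY, name TEXT, annual_revenue REAL);
    CREATE TABLE logins (account_id INTEGER, login_at TEXT);
    INSERT INTO accounts VALUES (1, 'Acme', 900000), (2, 'Globex', 750000),
                                (3, 'Initech', 500000), (4, 'Umbrella', 20000);
    INSERT INTO logins VALUES (1, '2026-02-24'), (2, '2026-02-10'), (4, '2026-02-25');
""")

# Top N accounts by revenue with no login in the 7 days before :as_of.
QUERY = """
    WITH top_accounts AS (
        SELECT account_id, name
        FROM accounts
        ORDER BY annual_revenue DESC
        LIMIT :top_n
    )
    SELECT t.name
    FROM top_accounts AS t
    LEFT JOIN logins AS l
        ON l.account_id = t.account_id
       AND l.login_at >= date(:as_of, '-7 days')
    WHERE l.account_id IS NULL   -- keep only accounts with no recent login
    ORDER BY t.name
"""

params = {"top_n": 3, "as_of": "2026-02-26"}
inactive = [row[0] for row in conn.execute(QUERY, params)]
print(inactive)
```

Even this toy version requires decisions about what "top" and "this week" mean, which is where the follow-up clarifications in the chain above tend to come from.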

IDC research shows that roughly 80% of analyst time goes into searching, cleaning, joining, and validating data rather than generating insight. Expensive talent ends up doing plumbing while business teams wait.

Dashboards answer the questions someone anticipated. They don’t handle follow-up questions, cross-table reasoning, or hypothesis testing. You can have clean dashboards and still move slowly.

Three fundamental shifts happened:

Skills that took years to acquire (writing SQL, interpreting statistical outputs, building models) are now available to anyone with a well-formed question, because the knowledge is embedded in the model.

Databases store tables like orders_fact and customer_dim.

Businesses think in terms like “repeat customers,” “revenue leakage,” and “activation rate.”

Someone always had to translate between the two. A unified semantic and context layer changes this. Business definitions, metric logic, entity relationships, and domain knowledge become encoded directly into the data context layer.
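As a rough illustration of the idea, the sketch below encodes one business term as a definition over `orders_fact` and `customer_dim`. It is not how any particular product implements a semantic layer. The column names (`customer_id`, `customer_name`, `order_id`, `amount`), the `SEMANTIC_LAYER` dictionary, the `resolve` helper, and the rule that a repeat customer has more than one order are all assumptions made for the example.

```python
import sqlite3

# Hypothetical semantic layer: each business term maps to a description
# and the SQL that defines it against the physical tables.
SEMANTIC_LAYER = {
    "repeat customers": {
        "description": "Customers with more than one order (assumed definition)",
        "sql": """
            SELECT c.customer_name
            FROM customer_dim AS c
            JOIN orders_fact AS o ON o.customer_id = c.customer_id
            GROUP BY c.customer_id, c.customer_name
            HAVING COUNT(*) > 1
            ORDER BY c.customer_name
        """,
    },
}


def resolve(term: str) -> str:
    """Translate a business term into the SQL that defines it."""
    key = term.strip().lower()
    if key not in SEMANTIC_LAYER:
        raise KeyError(f"No definition for business term: {term!r}")
    return SEMANTIC_LAYER[key]["sql"]


# Tiny in-memory dataset with assumed columns, for illustration only.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE customer_dim (customer_id INTEGER PRIMARY KEY, customer_name TEXT);
    CREATE TABLE orders_fact (order_id INTEGER PRIMARY KEY, customer_id INTEGER, amount REAL);
    INSERT INTO customer_dim VALUES (1, 'Ana'), (2, 'Ben'), (3, 'Cy');
    INSERT INTO orders_fact VALUES (101, 1, 50), (102, 1, 30), (103, 2, 20),
                                   (104, 3, 10), (105, 3, 15);
""")

repeat_customers = [row[0] for row in conn.execute(resolve("Repeat customers"))]
print(repeat_customers)
```

The point of the design is that the definition lives in one shared place, so every team asking about "repeat customers" gets the same logic without writing the join themselves.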

This enables a true natural-language BI interface, where marketing, finance, operations, and product teams can ask questions directly and get answers instantly.

Even the best analysts are limited by cognitive load. Large language models can ingest structured tables, unstructured documents, and business rules, and synthesize them into coherent explanations.

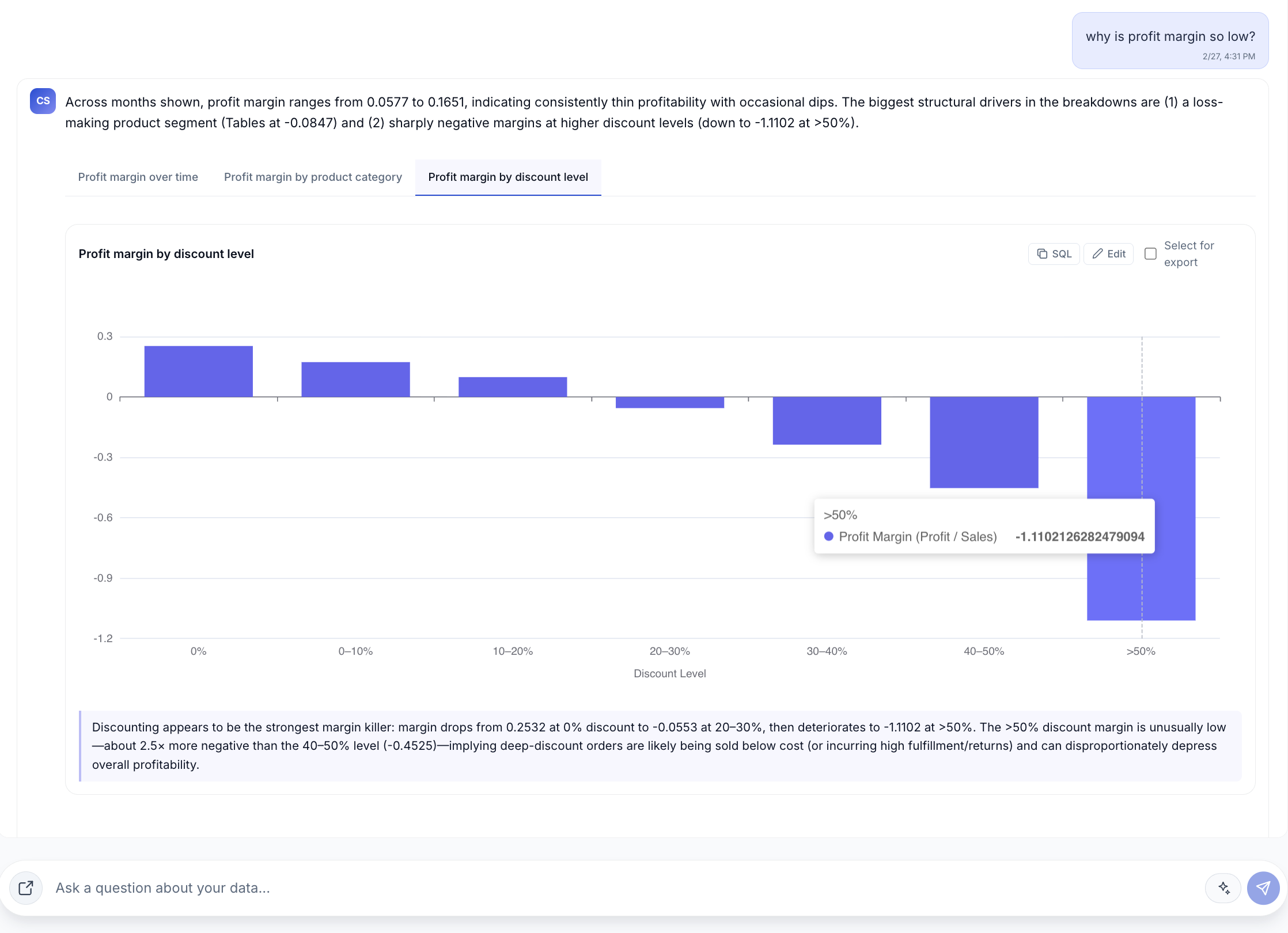

Let’s say you’re analyzing Superstore sales data. A category manager asks a single question: "Why is profit margin so low?"

The system returned a full diagnosis in seconds...

The manager didn't need to ask for three follow-up cuts. The system surfaced the breakdown by category, by discount level, and over time. The next question wasn't "can you dig deeper?" It was "which segment are we doing this for?"

That's the shift. Not faster reporting. Faster diagnosis.

Speed compounds.

A business that makes a decision in two hours, observes the result, and adjusts within the same operational period will consistently outperform one making the same quality decision a week later.

Gartner's research shows that organisations treating AI as a strategic capability, not a productivity tool, have outperformed their peers by 80% over the past nine years. That's the compounding return of a faster learning loop.

Not every decision needs to move faster. A large retailer doesn't need to know how many shirts sold in the last ten seconds. But knowing which product lines are being discounted below cost? That needs to surface in hours, not the quarterly review.

The right question isn't "how do we reduce all latency?" It's "which decisions are time-sensitive enough that a week's delay is actively costing us?"

Look for decisions that are:

That's where analysis latency is costing the most. And compressing it creates the most leverage.

Cubesite is a context-aware analytics layer that sits between your data warehouse and your team. Connect Snowflake, BigQuery, PostgreSQL, or Google Sheets, then ask your first question in plain English, with every answer traced back to the raw data that produced it.